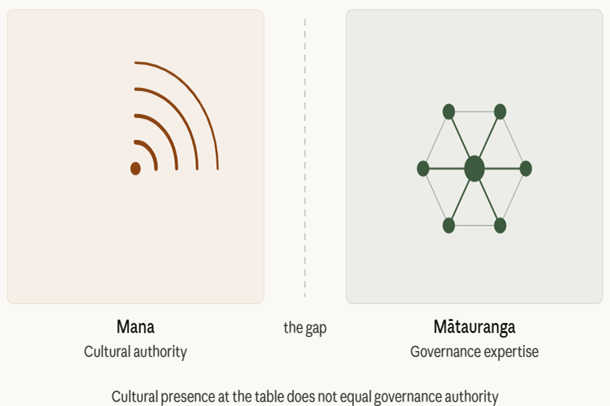

There is a pattern in New Zealand’s AI and data governance landscape that is rarely named directly, but it is immediately recognisable to anyone who has worked in this space. When organisations, government agencies, technology companies, universities, and research institutions decide they need Māori input into their AI and data ethics frameworks, they do not, as a rule, seek out Māori experts with formal qualifications in digital and data governance, data sovereignty, law, or technology ethics. They seek out Māori who are culturally credible, whose mana within Māori society is genuine and respected, but whose engagement with the technical, legal, and governance dimensions of AI systems is limited or entirely absent. The result is ethics documents that carry the cultural weight of Māori participation without the substantive expertise required to make that participation meaningful.

This is not a criticism of the Māori individuals who participate in these processes. Their cultural knowledge is often real, and in many cases they are not fully informed about the technical scope of what they are being asked to endorse. The criticism belongs to the organisations that design these processes. Asking a Māori representative with no background in algorithmic systems to review a data use policy does not constitute Māori oversight of that policy. These practices conflate cultural legitimacy with technical authority, and in doing so they produce something more dangerous than no Māori input at all: the appearance of informed Māori consent where no such consent exists.

Māori professionals with formal expertise in digital governance, data law, AI ethics, and technology policy are few in number, a direct consequence of the persistent structural barriers that have limited Māori participation in tertiary education and the technology sector for generations. That scarcity is then exploited, whether consciously or not, to substitute cultural participation for technical expertise. It is considerably easier, faster, and cheaper to convene a hui with community representatives than to engage the small number of Māori professionals who have the qualifications to provide genuine technical and governance oversight. It also produces less friction. A Māori person with technical skills asked to speak to the cultural dimensions of a data framework is unlikely to raise the same challenging questions about algorithmic bias, data sovereignty law, or Treaty obligations that a formally qualified Māori governance expert would.

What this pattern produces, in practice, is a double exclusion. Māori communities are denied genuine representation by qualified experts who understand the technical systems affecting them. And the Māori professionals who do hold that expertise are systematically bypassed in favour of cultural representatives whose participation can be more easily managed. This is the management of Māori input in a way that satisfies the appearance of consultation while ensuring that no one in the room has the knowledge or authority to fundamentally challenge what is being proposed. Te Tiriti demands something categorically different: not the presence of Māori faces at a table designed by others, but the genuine exercise of tino rangatiratanga by those with both the cultural standing and the technical expertise to exercise it with effect.

Whakapapa

The illustration uses a koru spiral in warm sienna and terracotta on the left to represent mana and cultural authority, and a hexagonal governance network in deep forest green on the right to represent mātauranga and technical expertise — with a dashed line marking the gap between the two. The earthy palette draws on the colours of whenua and ngahere, grounding the concept in a distinctly New Zealand visual language. The image was designed using Claude.ai.

Another paper

I have authored a paper, "Why Computer Engineers and Developers — and in Particular Indigenous and Minority Leaders and Media Commentators — Should Not Write AI Ethical Guidelines", that looks at this ever-growing international issue.