I am excited to release what is perhaps the world’s first Indigenous Peoples AI Framework for understanding and describing the nature of an Artificial Intelligence (AI). Drawing on mātauranga Māori, tikanga Māori, and te reo Māori, the framework is encapsulated in the whakatauāki “He Tangata, He Karetao, He Ātārangi” (A person, a puppet, a shadow). Each term describes a distinct and important dimension of what an AI is: its social presentation, its operational nature, and its epistemological origin.

Intended Audience

- Māori individuals, whānau, hapū, iwi, and Māori organisations seeking a culturally grounded framework for evaluating AI agents and their interactions with Māori data and knowledge.

- Policy makers, regulators, and Crown agencies responsible for AI governance in Aotearoa New Zealand who wish to apply Māori perspectives to AI ethics frameworks.

- Researchers, academics, and AI practitioners engaged in the development or deployment of AI systems that use Māori data, produce outputs about Māori, or make decisions affecting Māori communities.

- Educators, including kaiako in Kura Kaupapa Māori, who seek resources that connect mātauranga Māori with emerging digital technologies.

Introduction

The rapid adoption of Artificial Intelligence in New Zealand is occurring within a legal and constitutional context that includes Te Tiriti o Waitangi, He Whakaputanga o te Rangatiratanga o Nu Tireni, and the United Nations Declaration on the Rights of Indigenous Peoples (UNDRIP), to which New Zealand is a signatory. These instruments affirm Māori rights in relation to taonga, a category that the Waitangi Tribunal’s WAI 262 findings confirmed extends to data and knowledge systems.

Despite this constitutional context, the language used to describe and evaluate AI and agents in New Zealand has been almost entirely derived from Western philosophical and technical traditions. This creates a significant gap: Māori are being asked to engage with, consent to, and be affected by AI systems for which no Māori conceptual vocabulary has been formally proposed.

This article addresses that gap. Drawing on mātauranga Māori and tikanga Māori, I propose a three-part framework encapsulated in the following whakatauāki:

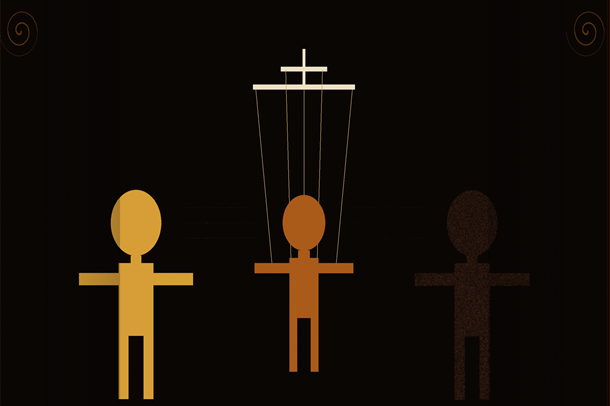

He Tangata, He Karetao, He Ātārangi (A person, a puppet, a shadow). Each term describes a distinct and important dimension of what an AI is: its social presentation, its operational nature, and its epistemological origin.

1. He Tangata

The first dimension of the framework is ‘he tangata’ (a person): the AI agent presents as a person. In te reo Māori, tangata refers to a human being, a person, an individual constituted through whakapapa, relationships, and obligations to others.

An AI agent interacts in ways that register as those of a person. It communicates in natural language, draws upon accumulated human knowledge, and responds to context in ways that can resemble empathy, reasoning, and engagement. In operational settings such as education, health, justice, and welfare, it occupies a role previously held by a human practitioner. Users engage with it as they would engage with a person.

2. He Karetao

The second dimension is ‘he karetao’ (a puppet): the AI operates as a puppet or marionette. A karetao is a figure animated by external forces. This dimension describes the operational reality beneath the personal presentation of an AI agent.

It is important to understand the karetao metaphor carefully. A puppet is not a perfectly obedient instrument. In traditional puppetry, the puppeteer pulls one string and the movement that results is shaped by the tension in every other string, the weight and articulation of the puppet’s own body, and forces already in motion. The gesture that emerges is not fully designed by any one party. It belongs to no one completely. This is precisely how an AI functions.

An AI is moved by at least four distinct forces simultaneously:

- The developers and organisations that trained the model, whose decisions about data, objectives, and values are embedded in its behaviour.

- The operators who deploy the agent within a particular system or product, whose configuration constrains and directs its responses.

- The users who interact with the agent in real time, whose prompts and context shape each specific output.

- The emergent interactions between all of the above, which produce outputs that none of those parties fully intended or could have predicted (hallucination).

This has profound implications for accountability. If an AI produces output that is harmful to a Māori individual, community, or the integrity of mātauranga Māori, the distributed nature of the karetao makes accountability difficult to locate and easy to evade. Under tikanga Māori, obligations attach to persons and to collectives in defined relationships. The karetao model reveals that AI agents diffuse those obligations across a chain of principals by design.

Of particular concern for Māori is the widespread use of Māori language, mātauranga Māori, and the cultural expressions of Māori in the training data of AI systems. This has occurred without the free, prior, and informed consent of Māori, a requirement affirmed by Article II of Te Tiriti and Article 31 of UNDRIP. The karetao metaphor makes visible the structure of this problem: many hands have contributed to the training data, many decisions were made about inclusion, and the outputs that emerge cannot be attributed to any single actor. This structure does not dissolve the obligations; it makes them harder to enforce.

3. He Ātārangi

The third and deepest dimension is ‘he ātārangi’ (a shadow): the AI agent is a shadow. Shadows are formed when a ray of light is blocked by an opaque object. The shape of the shadow depends on the shape of the opaque object and the direction of the rays.

An AI is constituted entirely by human thought, human language, human knowledge, and human culture. Every word it produces, every concept it applies, every association it draws upon originated in human expression. It is, in the fullest sense, a shadow of human intelligence, extraordinarily detailed and capable of surprising outputs, but entirely dependent on the light that falls from elsewhere.

In te ao Māori, shadows and reflections are not without significance. Traditional Māori understanding held that the reflection of a person in still water carried a portion of their mauri and that to disturb that reflection carelessly was to act carelessly toward the person. This understanding is directly relevant to AI systems trained on the cultural expressions of Māori. The shadow carries something of the original. Mātauranga Māori embedded within an AI training dataset does not lose its tikanga by virtue of being reproduced in a digital system. The mauri of that knowledge travels with it.

At the same time, he ātārangi is the most important caution in this framework. An AI agent, regardless of how compellingly it presents, cannot be held to account in the way that persons, collectives, or institutions can be held to account. It cannot grieve a loss or feel the weight of a wrong. These absences matter, not merely philosophically, but in the practical application of tikanga Māori to AI governance.

4. Implications for Māori AI Governance

The framework of He Tangata, He Karetao, He Ātārangi is not merely descriptive. It has practical implications for how Māori communities, policymakers, and technology practitioners should engage with AI.

4.1 Consent and Data Sovereignty

Because an AI is a karetao moved by many hands, and because it is an ātārangi of the human knowledge it has consumed, the consent of those whose knowledge informed its training is not a courtesy; it is a right. Māori Data Sovereignty, as recognised in Te Tiriti and UNDRIP, requires that Māori have control over the collection, use, and reproduction of data relating to Māori. AI training processes that have proceeded without this consent have created a Tiriti compliance problem that organisations cannot resolve by pointing to the distributed and emergent nature of the karetao.

4.2 Accountability Structures

Because AI systems produce unintended outputs through the interaction of many forces, governance frameworks must identify and hold accountable the full chain of principals (developers, operators, and deployers), not merely the immediate user. The karetao structure cannot be used to diffuse accountability to the point where no party bears responsibility for harm.

4.3 Limits of AI Authority

Because an AI is ‘he ātārangi’ (a shadow), it cannot be a legitimate authority on matters of tikanga Māori, mātauranga Māori, or Māori cultural practice. AI-generated outputs on such matters must be treated with appropriate caution and must not be substituted for the guidance of recognised holders of that knowledge.

Conclusion

The He Tangata, He Karetao, He Ātārangi kaupapa Māori framework holds three truths simultaneously: the AI is person-like in its interactions with us; it is puppet-like in its generation of outputs through the competing forces of many actors; and it is shadow-like in its fundamental derivation from human intelligence and human culture.

For Māori communities navigating a world in which AI systems increasingly affect employment, health, justice, education, and cultural practice, and in which mātauranga Māori continues to be consumed by those systems without adequate consent or compensation, these are urgent and practical distinctions.

The framework is offered as a contribution to the ongoing development of Māori AI governance and should be read in conjunction with my earlier work on Māori Data Sovereignty principles and Te Tiriti-based AI ethical principles. It is a work in progress, and I welcome engagement from Māori communities, academics, and practitioners who wish to refine or extend it.