As AI systems grow more capable, companies appear increasingly willing to look beyond traditional engineering disciplines for guidance on questions that touch on consciousness, identity and what it means to interact meaningfully with a machine.

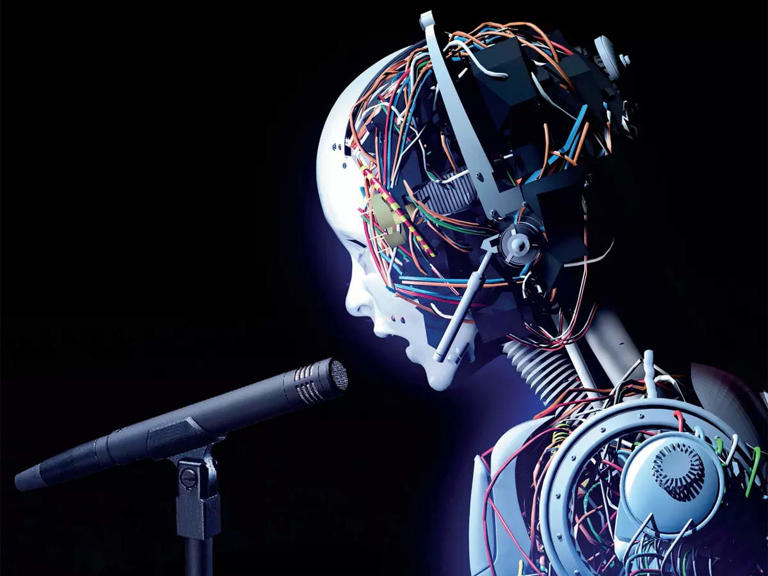

On 13 April 2026, Google DeepMind announced it had hired philosopher Henry Shevlin to study machine consciousness, human-AI relationships, and AGI readiness. The move was framed as surprising, though it is unlikely to have surprised many Indigenous Peoples. The media article is here.

For decades, I and others have argued that AI governance can’t be left to engineers and Big Tech companies. Governance frameworks need to be grounded in tikanga Māori, Te Tiriti o Waitangi, AI legal personhood, and Māori/Indigenous data sovereignty, because these already address the questions Big Tech is only now beginning to ask. These frameworks start from a simple premise: knowledge creates relationships, those relationships create obligations, and entities formed from knowledge require governance.

The issue is not whether AI can be conscious, a version of philosophy’s “hard problem”, but whether companies will engage with existing Indigenous knowledge systems or attempt to rebuild them from a Western perspective while continuing extractive practices.

The Waitangi Tribunal’s WAI 2522 finding affirms Māori data as taonga under Te Tiriti. AI systems built on that data are also taonga, subject to Māori governance. Similarly, applying tikanga Māori shows that AI systems carry mauri shaped by their data and relationships. Protecting that mauri requires governance from the outset, not after deployment.

This is part of a global pattern in which Indigenous communities are increasingly recognising non-human entities as legal persons, reflecting longstanding relational worldviews. Meanwhile, the same tech industry that has relied on the theft of Indigenous data is now turning to philosophy to define what it has built.

Meaningful change requires engaging Indigenous governance frameworks at the foundation of AI design.

Big Tech’s recognition that AI raises philosophical questions is overdue but incomplete. The deeper issue is not what AI is, but what relationships it embodies and what obligations follow. Indigenous knowledge systems have long addressed this. The challenge now is whether the technology sector is willing to listen.