Over the past few days we have been hearing about AI and recruitment issues, including the story of a young man who faced bias. I am increasingly concerned that the media is not giving Māori any consideration in their AI bias stories, relying instead on academics for opinions and letting them talk about us as though we are not capable of speaking up for ourselves.

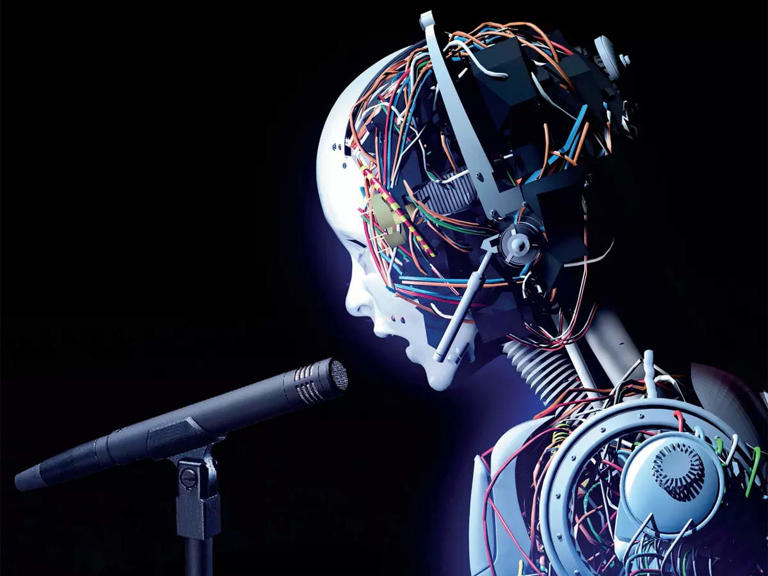

The increasing adoption of artificial intelligence (AI) in recruitment and candidate interview processes presents significant and underexamined risks for Māori and other indigenous peoples globally. As organisations across New Zealand and internationally deploy AI-powered tools to screen applications, conduct video interviews, and rank candidates, the structural inequities embedded within these systems threaten to reinforce and, in some cases, deepen existing disparities in employment outcomes for indigenous communities.

This article examines the principal impacts of AI-mediated hiring on Māori, situating those impacts within the frameworks of Te Tiriti o Waitangi, Māori data sovereignty, and indigenous rights as affirmed by the United Nations Declaration on the Rights of Indigenous Peoples (UNDRIP).

Algorithmic Bias and Underrepresentation in Training Data

AI recruitment tools learn from historical datasets. These datasets reflect the demographic composition of past hiring decisions shaped by decades of structural discrimination; the resulting algorithms encode and perpetuate those same biases at scale.

Research consistently demonstrates that when training data is incomplete, unrepresentative, or culturally biased, AI systems produce inaccurate predictions and perpetuate harmful stereotypes. Māori communities face particular exposure because their data is chronically underrepresented or misinterpreted within mainstream datasets. AI recruitment tools developed in the United States or Europe, the dominant source markets for such products, are almost certainly trained on datasets in which Māori, Pasifika, and other indigenous peoples have negligible representation.

Studies on algorithmic bias in hiring have found that candidates with names associated with ethnic minorities receive significantly fewer interview offers than equivalently qualified candidates with names associated with the majority population. AI tools that score resumes, rank candidates, or assess video interview responses operate within this same biased paradigm.

Communication, Language, and Cultural Expression

AI interview platforms increasingly assess candidates not only on the content of their responses, but on vocal tone, pacing, facial expression, eye contact, and emotional register. These assessments are calibrated against norms derived from majority population training data and reflect Western, often North American, professional communication conventions.

This methodology is structurally disadvantageous to Māori candidates and speakers of te reo Māori for several reasons:

- Candidates who speak te reo Māori as a first language, or who switch between te reo and English, may be assessed inaccurately by speech recognition and natural language processing systems not trained on te reo or its associated English language patterns.

- Tikanga Māori encompasses distinct communicative practices, including considered pauses before responding, indirect expression, and collective rather than individualist framing, that AI systems may misinterpret as hesitation, lack of confidence, or disengagement.

- Indigenous non-verbal communication practices may not align with the behavioural benchmarks against which AI video interview tools measure candidates, resulting in systematically lower scores for no reason related to a candidate’s actual capability.

Facial and Biometric Analysis

Several AI interview platforms deploy facial recognition and affective computing technologies to analyse candidates’ emotional states and suitability during video interviews. The limitations of such technologies for non-white populations are well established in the academic literature. Facial recognition systems have consistently demonstrated substantially lower accuracy for women and for individuals with darker skin tones compared with white male subjects.

Where AI interview tools apply facial or biometric analysis to Māori or Pasifika candidates, the risk of inaccurate or discriminatory assessment is correspondingly elevated. This is particularly concerning given the opacity of these systems: candidates are rarely informed of how their biometric data is being used, what thresholds determine a favourable or unfavourable outcome, or whether any human review of automated decisions occurs.

Transparency, Accountability, and the Right to Explanation

A defining characteristic of AI recruitment tools is their opacity. The algorithmic processes that determine whether a candidate progresses or is eliminated are, in most cases, inaccessible to the candidate, undisclosed by the vendor, and unaudited by any independent body.

There is currently no standardised test for identifying when an AI recruitment tool is producing discriminatory outcomes. Vendors may conduct internal testing and auditing, but such results are rarely made publicly available. Independent external auditing remains uncommon. Job seekers, including Māori candidates, have no meaningful avenue through which to challenge an automated assessment or seek an explanation for an adverse outcome.

Candidates have a legitimate right to understand the basis on which consequential decisions about their employment prospects are made. The black box nature of AI recruitment tools directly undermines that right.

Te Tiriti o Waitangi and Māori Data Sovereignty

In the context of New Zealand, the deployment of AI hiring tools intersects with obligations arising under Te Tiriti o Waitangi. The Crown and, by extension, organisations operating within its jurisdiction carry obligations of active protection toward Māori. The use of AI systems that demonstrably produce disparate and adverse outcomes for Māori candidates is inconsistent with those obligations.

Māori data sovereignty, the right of Māori communities to own, control, access, and govern data that derives from and pertains to them, is foundational to any equitable engagement with AI. Biometric, behavioural, and linguistic data gathered from Māori candidates during AI-mediated interviews are Māori data. Without adequate governance frameworks grounded in tikanga and Te Tiriti, such data may be used for purposes that violate Māori autonomy, repurposed for commercial gain, or incorporated into training datasets in ways that further entrench algorithmic bias against future Māori applicants.

The principle of kaitiakitanga (guardianship and active stewardship) extends naturally into the digital domain. Organisations with a genuine commitment to Te Tiriti must apply that stewardship ethic to the AI systems they deploy, ensuring those systems do not become instruments of discrimination dressed in the language of efficiency and objectivity.

The Risk of Entrenching Systemic Inequity

Employment is a domain in which Māori already experience significant disparity relative to the non-indigenous population. AI recruitment tools that screen out Māori candidates at the earliest stages of the hiring process, before any human interviewer can assess their experience, cultural capability, or potential, risk compounding those disparities in ways that are invisible, uncontested, and rapidly normalised.

The speed and scale at which AI systems operate mean that discriminatory effects, once embedded, are replicated across thousands of hiring decisions before they are identified. Unlike a biased human interviewer, an AI system does not tire, reconsider, or respond to feedback. It applies the same flawed logic consistently, at volume, without conscience.

Towards an Equitable Framework

Addressing the risks identified above requires action at multiple levels: by AI vendors, by organisations deploying these tools, by regulators, and by Māori and indigenous communities themselves.

For organisations using AI hiring tools, minimum requirements should include:

- Conducting a human rights and cultural/Te Tiriti impact assessment prior to deployment, with specific consideration of impacts on Māori and Pasifika candidates.

- Requiring vendors to provide transparent disclosure of training data composition, algorithmic logic, and independent audit results.

- Ensuring that no candidate is eliminated solely based on an automated assessment without human review.

- Engaging meaningfully with Māori communities, not in a consultative capacity only, but with genuine decision-making authority over how such tools are used.

For regulators, there is an urgent need for binding legislative frameworks, and updates to the Algorithm Charter, that require pre-deployment auditing of AI recruitment tools for disparate impact, mandate transparency with candidates about how automated systems are used, and establish enforceable rights to explanation and redress.
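To make the idea of auditing for disparate impact more concrete, the following is a minimal sketch of one common check: comparing the rate at which each group of applicants passes an automated screen. It assumes the 0.8 threshold of the "four-fifths" rule of thumb used in United States employment guidance, which is not a New Zealand legal standard, and the group labels and counts are invented purely for illustration. A real audit would need far more than this single ratio, including adequate sample sizes and Māori oversight of how ethnicity data is collected and used.

```python
from dataclasses import dataclass


@dataclass
class GroupOutcome:
    """Screening counts for one applicant group (illustrative numbers only)."""
    group: str
    applicants: int
    advanced: int  # candidates the automated screen passed to the next stage

    @property
    def selection_rate(self) -> float:
        if self.applicants <= 0:
            raise ValueError(f"{self.group}: applicants must be positive")
        return self.advanced / self.applicants


def impact_ratios(outcomes: list[GroupOutcome]) -> dict[str, float]:
    """Divide each group's selection rate by the highest group's rate."""
    best = max(o.selection_rate for o in outcomes)
    if best == 0:
        raise ValueError("no group had any candidate advance")
    return {o.group: o.selection_rate / best for o in outcomes}


def flag_disparate_impact(
    outcomes: list[GroupOutcome], threshold: float = 0.8
) -> list[str]:
    """List groups whose ratio falls below the threshold (assumed: 0.8)."""
    return [g for g, r in impact_ratios(outcomes).items() if r < threshold]


# Hypothetical counts, not drawn from any real employer or tool.
outcomes = [
    GroupOutcome("group_a", applicants=200, advanced=60),  # rate 0.30
    GroupOutcome("group_b", applicants=100, advanced=18),  # rate 0.18
]
ratios = impact_ratios(outcomes)
flagged = flag_disparate_impact(outcomes)
print(ratios, flagged)
```

In this invented example, group_b advances at roughly 60% of group_a's rate, so it falls below the assumed threshold and is flagged for closer review.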

For Māori communities, the development of Māori-led AI governance frameworks grounded in principles such as kaitiakitanga, tikanga, and the CARE Principles for Indigenous Data Governance (Collective Benefit, Authority to Control, Responsibility, Ethics) offers a pathway to asserting data sovereignty in this domain. Initiatives such as Te Hiku Media’s kaitiakitanga licensing model demonstrate that Māori communities can and should govern the conditions under which their data is used in AI systems.

Conclusion

The adoption of AI in candidate interviewing is a consequential shift in the exercise of institutional power over employment opportunities, one that, in its current form, carries material risks of discrimination against Māori. Those risks arise from the structural biases embedded in training data, the cultural assumptions encoded in assessment algorithms, and the near-total absence of transparency, accountability, and regulatory oversight.

Addressing these risks should not be optional for organisations operating in New Zealand. It is a Te Tiriti obligation, a human rights imperative, and a prerequisite for any credible commitment to equity and inclusion in the workplace.

Documented Cases of Algorithmic Bias in AI Hiring

This section was generated with ChatGPT and fact-checked by a human.

Case 1 — Mobley v. Workday, Inc. (US, 2023–ongoing)

Status: Ongoing class action

In 2023, Derek Mobley filed a lawsuit against Workday, a company that provides AI tools used by employers to screen job applicants. Mobley — who is Black, over 40, and has a disability — said he applied for over 100 jobs through companies using Workday’s system and got rejected every single time, often within hours.

He argued that the AI screening tool was unfairly filtering people out based on race, age, and disability, which would break US anti-discrimination laws.

Workday tried to get the case dismissed, saying they’re just a software provider, not an employer. But the court didn’t accept that. The judge said Workday’s system basically acts like a gatekeeper in hiring, so it can still be held responsible — even though it’s automated.

In May 2025, the court allowed part of the case (age discrimination) to move forward as a nationwide class action, potentially covering millions of applicants over 40. The race and disability claims are still part of the case but haven’t been approved as class actions yet.

The case is still ongoing, and nothing has been proven yet — Workday denies the claims.

Why this matters:

This case suggests that companies making AI hiring tools could be legally responsible if those tools discriminate — even if they’re not the employer. That idea could easily apply in places like New Zealand too.

Case 2 — Amazon’s Experimental AI Hiring Tool (US, 2014–2017)

Status: Shut down (never officially used in hiring)

Amazon built an AI tool to help sort job applications by ranking candidates from 1 to 5 stars. It was mainly for technical roles like software engineering.

But by 2015, they found a big problem: the system preferred male candidates. Why? Because it was trained on past hiring data — and most of those past hires were men. So the AI basically learned that “male = better candidate.”

It even penalised résumés that included words like “women’s” (e.g. women’s organisations) and downgraded graduates from women-only colleges.

Amazon tried to fix it but couldn’t be sure the bias was fully gone, so they scrapped the project in 2017. The story became public in 2018.

Amazon says the tool was never actually used in real hiring decisions, but it still clearly showed how bias can creep into AI systems.

Why this matters:

AI doesn’t start neutral — it learns from history. If the past is biased, the AI will be too. That’s especially concerning for groups who’ve historically been underrepresented, because the system can end up reinforcing those patterns.

Case 3 — Baker v. CVS Health Corporation (US, 2023–2024)

Status: Settled (details private)

In 2023, Brendan Baker sued CVS over its use of an AI-powered video interview system.

Here’s how it worked: candidates recorded video interviews, and AI analysed things like facial expressions, tone of voice, and eye contact. It then gave each person a score based on “employability” or “cultural fit.”

The lawsuit argued this might break a Massachusetts law that bans using tools to detect deception in hiring.

The case was allowed to move forward, but it ended in a private settlement in 2024, so we don’t know the outcome details.

Why this matters:

- The AI was making judgments about personality and honesty based on facial and voice data — something that isn’t scientifically reliable.

- These systems are known to be less accurate for non-white people.

- Candidates often didn’t fully understand how they were being assessed or that AI was scoring them at all.

Case 4 — University of Washington Résumé Study (US, 2024)

Status: Published academic research

Researchers tested how AI systems rank job applicants by sending in over 550 identical résumés — the only difference was the names, which signalled different races and genders.

The results were pretty clear:

- Résumés with white-associated names were preferred 85% of the time

- Black male-associated names were never ranked higher than white male ones

- Equal treatment happened in only about 6% of cases

They also found that even if you remove names, other details (like postcode, wording, or affiliations) can still signal someone’s background and influence AI decisions.

Why this matters:

Simply “hiding names” isn’t enough to stop bias. AI can still pick up indirect clues about a person’s identity.

In a New Zealand context, things like Māori names, te reo Māori, or affiliations with Māori or Pasifika organisations could act as similar signals — and potentially lead to biased outcomes.
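For anyone wanting to see what a name-substitution test like the one in Case 4 looks like in practice, here is a rough sketch. It is not the researchers' actual code. The scorer below is a made-up stand-in that is deliberately biased so the audit has something to detect, and the placeholder names, the template, and the `paired_name_audit` function are all hypothetical. In a real audit, the scorer would be the vendor's tool, and the name lists would be chosen with the communities concerned.

```python
from itertools import product
from typing import Callable


def paired_name_audit(
    score: Callable[[str], float],
    template: str,
    names_a: list[str],
    names_b: list[str],
) -> dict[str, float]:
    """Score the same résumé text under every pairing of names and
    report how often each group's name received the higher score."""
    a_wins = b_wins = ties = 0
    for name_a, name_b in product(names_a, names_b):
        score_a = score(template.format(name=name_a))
        score_b = score(template.format(name=name_b))
        if score_a > score_b:
            a_wins += 1
        elif score_b > score_a:
            b_wins += 1
        else:
            ties += 1
    total = a_wins + b_wins + ties
    return {
        "a_preferred": a_wins / total,
        "b_preferred": b_wins / total,
        "equal": ties / total,
    }


def toy_biased_scorer(resume_text: str) -> float:
    """Hypothetical stand-in for a screening model, biased on purpose."""
    score = 1.0 if "Python" in resume_text else 0.0
    if "Placeholder-B" in resume_text:  # penalises group B names only
        score -= 0.5
    return score


# Everything except the name is identical across the compared résumés.
TEMPLATE = "Name: {name}\nSkills: Python, SQL\nExperience: 5 years"

result = paired_name_audit(
    toy_biased_scorer,
    TEMPLATE,
    names_a=["Placeholder-A1", "Placeholder-A2"],
    names_b=["Placeholder-B1", "Placeholder-B2"],
)
print(result)
```

Because the only thing that changes between the paired résumés is the name, any consistent gap in scores points to the name itself, or something the system associates with it, as the cause.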