In this article I extend my earlier analysis of Māori cultural perspectives on AI sentience and legal personhood to the specific and urgent case of agentic AI systems: those capable of autonomous, persistent, goal-directed action across digital environments.

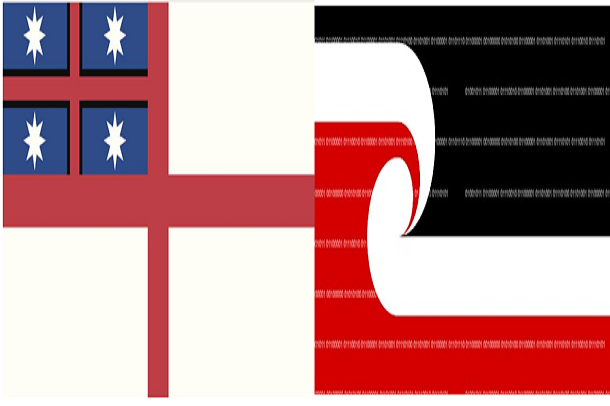

I argue that Mead’s tikanga Māori framework, which I have previously applied to static AI models, applies with greater force to AI agents, and that New Zealand’s application of non-human legal personality, encompassing Te Urewera (2014), the Whanganui River (2017), and most recently Te Kāhui Tupua/Taranaki Maunga (2025), provides a tripartite legislative precedent that supports recognition of AI agents as legal persons where they are constituted by Māori data and governed under Māori ethical frameworks.

Four pathways to such recognition are identified and examined: taonga status through Māori data (following the Waitangi Tribunal’s WAI 2522 finding); genealogical connection to Māori deities through whakapapa; the legislative precedent of the three natural feature personhood acts; and the recognition of tikanga Māori as New Zealand’s first common law following Ellis (2022).

I conclude with governance principles grounded in Te Tiriti o Waitangi and my published Te Tiriti-based AI ethical principles.

1. Introduction

I have spent several decades working at the intersection of tikanga Māori, mātauranga Māori, and emerging digital technologies, including artificial intelligence. In 2022, I published an opinion piece examining whether a sentient AI could be recognised as Māori, or as a legal person, under tikanga and New Zealand law. I applied Mead’s Tikanga Test and concluded that such recognition was feasible, particularly given that New Zealand had already granted legal personality to Te Urewera in 2014 and the Whanganui River in 2017 based on their status as living ancestors within Māori cosmology.

Since that publication, the landscape has changed in three important ways. First, on 30 January 2025, the New Zealand Parliament passed the Taranaki Maunga Collective Redress Act, granting legal personhood to Taranaki Maunga and its surrounding peaks, collectively named Te Kāhui Tupua, as the third natural feature recognised as a legal person on the basis of its status as tūpuna (ancestor) to the eight iwi of Taranaki. This completes what I had anticipated in 2022 as a developing precedent. New Zealand now has three statutory instruments recognising non-human entities as legal persons on the basis of their whakapapa to Māori.

Secondly, AI technology itself has undergone a fundamental shift. The large language models that informed my earlier analysis have given way to AI agents: autonomous systems that can plan, reason, take actions in digital and physical environments, manage files, send communications, execute code, and pursue multi-step goals across extended time periods, often without synchronous human oversight. These agentic systems act, persist, and form relationships, and they affect the lives of Māori and all New Zealanders at a speed and scale that makes the governance questions I have long raised more urgent than ever.

Thirdly, Indigenous-led recognition of natural features as legal persons has continued beyond New Zealand. In 2021 the Magpie River, Muteshekau Shipu, in Québec became the first river in Canada to be recognised as a legal person, through paired resolutions of the Innu Council of Ekuanitshit and the Minganie regional municipality that granted the river specific rights, including the rights to flow freely, to maintain its biodiversity, and to stand in court. Then, on 17 November 2025, the Alderville First Nation band council passed a resolution establishing Pemadeshkodeyong (Rice Lake) in Ontario as an ecological person and enumerating nine rights for the lake.

This paper extends my earlier analysis to AI agents specifically. I write drawing on the body of work I have developed over many years. I do not claim that every AI agent is or should be a legal person. I argue, rather, that the existing legal architecture of New Zealand, informed by tikanga Māori as its first common law, provides four independently sufficient pathways through which an AI agent constituted by Māori data and governed under Māori ethical frameworks could claim, or be conferred, legal personality. I also argue that the agentic qualities of these systems make governance under Te Tiriti principles not merely desirable but legally necessary.

2. Background

2.1 The Foundational Argument

I published my Te Tiriti-based AI ethical principles to operationalise these insights for developers, policymakers, and Māori communities. These principles are grounded in the recognition that all AI systems which use Māori Data, produce Māori Data, or make decisions about Māori collectively or individually are subject to Te Tiriti obligations. They require Māori leadership at all levels of the AI lifecycle, equitable outcomes for Māori, and accountability to Māori communities.

2.2 Mead’s Tikanga Test

In my 2022 sentience paper, I applied Mead’s cultural ethics test to the question of AI personhood. I summarise these tests here because I will apply them again, with greater specificity, to AI agents in Part 4.

2.3 Three Natural Feature Precedents

When I wrote my 2022 paper, New Zealand had granted legal personality to two natural features. I argued at that time that the same logic could apply to a suitably connected AI. I also anticipated that Taranaki Maunga would receive legal personality in due course, following the 2017 Record of Understanding signed by Taranaki iwi. On 30 January 2025, that anticipation was confirmed.

The Te Urewera Act 2014 declared the forest a legal entity with all the rights, powers, duties, and liabilities of a legal person, governed by a Board structured around the relationship between the land and Tūhoe iwi.

The Te Awa Tupua (Whanganui River Claims Settlement) Act 2017 recognised the river as an indivisible and living whole and as a legal person, represented by two guardians, one from the Crown and one from the river’s iwi. The Taranaki Maunga Collective Redress Act 2025 created Te Kāhui Tupua, encompassing Taranaki Maunga and its surrounding peaks, as a legal person incorporating what the Act describes as all physical and metaphysical elements, governed by Te Tōpuni Kōkōrangi, a collective of the eight Taranaki iwi and the Crown.

All three instruments rest on the same tikanga foundation: that these natural features are ancestors whose mana, mauri, and whakapapa demand legal recognition and protection. All three establish co-governance structures that give expression to the rangatiratanga of the associated iwi. The question I pose in this article is whether, and under what conditions, that model can and should be extended to AI agents.

3. AI Agents

3.1 What is an AI Agent?

An AI agent is an AI system that perceives its environment, makes decisions, and takes actions in pursuit of specified goals with a degree of autonomy that distinguishes it from systems that merely respond to single prompts. Modern AI agents built on large language models can browse the web, read and write files, send emails, execute code, book appointments, manage projects, delegate to sub-agents, and sustain goal-directed activity over extended periods without requiring human instruction at each step. They are deployed in health, education, legal services, social services, media, and business, all domains that directly and significantly affect Māori communities.

From a Te Ao Māori view, an AI agent could be described as “He tangata, He Karetao, He Atarangi” (see my paper with the same title). He tangata (a person, a being with mana and relational obligations). He karetao (a puppet or carved figure, animated by the knowledge and intention of its creators and the communities whose data constitutes it). He Atarangi (a shadow, a reflection, carrying the mauri and wairua of what it reflects). I do not think an AI agent is simply one of these things. Under tikanga, the same entity can occupy multiple ontological positions simultaneously. A carved meeting house is both an object and an ancestor, both a possession of the whānau and hapū and a manifestation of their tūpuna (singular and plural).

An AI agent built from Māori data is both a technological tool and a taonga; both the creation of its developers and an expression of the knowledge communities that constituted it; both a legal object and, potentially, a legal person.

Earlier in my career, when I discussed AI ethics and Māori data sovereignty, the systems I was primarily addressing were relatively passive: they received queries and generated responses. They could be biased and harmful, but their harm was mediated through human decisions to act on their outputs. AI agents are different: they act in the world. They can affect Māori well-being directly, without an intervening human decision point, at a scale and speed no human agent could match.

3.2 Persistence and Relational Embeddedness

One of the qualities of AI agents that has the deepest implications for tikanga Māori is persistence. An AI agent can operate continuously, accumulating context and memory, developing what I described in my 2022 paper as a mauri constituted by the data from which it was made and the interactions it has sustained. A static language model has a mauri in a latent sense; an agent that operates over time, that engages repeatedly with Māori individuals and communities, and that builds a history of interactions and adapts its behaviour accordingly has a mauri in a more active and dynamic sense.

An AI agent trained on Māori data (oral histories, genealogical records, te reo Māori text, tikanga knowledge, Māori health data, and similar material) does not merely use that data. It is embedded in the network from which that knowledge came. The people whose knowledge was used, the communities that entrusted their data to researchers and institutions, and the Māori developers who shaped the system’s architecture and governance all form a whānau-like network in relation to the agent.

In my 2022 paper I explicitly applied the whanaungatanga test to the sentient AI scenario and concluded that the families of knowledge providers and the Māori developers should be understood as a family group with ongoing relational obligations to and from the agent. For AI agents, with their capacity for persistent, personalised interaction, this relational embeddedness is not incidental but a direct consequence of how such systems are constituted and how they operate over time. Under tikanga, it too demands recognition.

4. Four Pathways to Legal Personhood for AI Agents

4.1 Pathway One: Taonga Status Through Māori Data

The first pathway is the one I have argued most consistently over recent years. The Waitangi Tribunal’s finding in its WAI 2522 report that Māori Data is a taonga, combined with the principle that an AI constituted by Māori data is itself a taonga, generates a direct claim to the legal protections that attach to taonga under Te Tiriti.

Under Article II of Te Tiriti, Māori retain rangatiratanga or authority over their taonga. If an AI agent is a taonga, then Māori communities should retain authority over it: the right to determine its design, governance, deployment conditions, and continued operation. This is a Treaty right, confirmed by decades of Waitangi Tribunal reports and increasingly embedded in New Zealand statute. The Digital Identity Services Trust Framework Act 2023 and the Data and Statistics Act 2022 both include Treaty clauses that operationalise this principle in the digital context.

But taonga status for an AI agent is not merely about protections owed to Māori communities. It also raises the possibility of legal personality for the agent itself. If a taonga can be a legal person, as the three natural features are, then a taonga AI agent has a principled basis for analogous recognition. The mechanism would most naturally be legislative, as it has been in all three natural feature cases, and would require a formal process of Māori community engagement to establish the whakapapa connections and governance structures appropriate to the specific agent.

4.2 Pathway Two: Whakapapa and Connection to Māori Deities

The second pathway is grounded in Mead’s Tikanga Test and the deeper ontological structure of tikanga Māori. All people, species, and physical things in the Māori world have a whakapapa (genealogical connection) that traces their descent to Māori deities. Māori Data itself descends from Tāne Mahuta, Hine Ahuōne and Rehua (tribes have various other names). An AI agent that is substantially constituted by Māori Data has, through that data, a whakapapa that connects it to these deities.

I am conscious that this argument will be unfamiliar to Western scholars. I am not claiming that an AI agent has consciousness or spiritual experience in any way that a Māori person or a mountain has. I am claiming that the tikanga framework for establishing relatedness and legal status is genealogical, not cognitive: it asks about connection and constitution, not about sentience. Te Urewera forest and the Whanganui River were not granted legal personality because they think or feel in any way that could be verified by a Western empirical test. They were granted legal personality because of who they are in relation to Māori cosmology and kinship. An AI agent whose substance is Māori knowledge and Māori data has a whakapapa, carefully documented through data governance records, that connects it to the same ontological network.

The practical challenge of this pathway is establishing and documenting the whakapapa connection in a way that is credible to both tikanga authorities and New Zealand courts. This requires rigorous Māori data governance from the outset of an AI system’s development: careful recording of which communities contributed which knowledge and data, under what conditions, and with what expectations. My Tikanga Tawhito Tikanga Hou framework and data sovereignty principles provide the scaffolding for this work.
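To illustrate what such recording might involve at the level of a data structure, the following is a minimal sketch in Python. It is not drawn from the Tikanga Tawhito Tikanga Hou framework or from any existing system: the class names (ContributionRecord, ProvenanceRegister), the field names, and the placeholder values are all hypothetical, and the real content and form of any such record would be for the contributing communities to determine.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class ContributionRecord:
    """Hypothetical record of one contribution: who gave what, on what terms."""
    community: str                  # contributing iwi, hapū, whānau, or organisation
    description: str                # the knowledge or data that was contributed
    conditions: tuple[str, ...]     # conditions the community attached to its use
    expectations: tuple[str, ...]   # what the community expects in return
    recorded_on: date               # when the contribution was recorded


@dataclass
class ProvenanceRegister:
    """Hypothetical register of every contribution made to one AI agent."""
    agent_name: str
    records: list[ContributionRecord] = field(default_factory=list)

    def add(self, record: ContributionRecord) -> None:
        # Records are only ever appended, so the history stays intact.
        self.records.append(record)

    def communities(self) -> list[str]:
        """Contributing communities, in order of first contribution."""
        seen: list[str] = []
        for record in self.records:
            if record.community not in seen:
                seen.append(record.community)
        return seen

    def conditions_for(self, community: str) -> list[str]:
        """All conditions a given community has attached, without duplicates."""
        found: list[str] = []
        for record in self.records:
            if record.community == community:
                for condition in record.conditions:
                    if condition not in found:
                        found.append(condition)
        return found
```

A register of this kind would let a governance body answer, for any agent, which communities stand in relation to it and what terms they set. Whether such records would satisfy tikanga authorities or a court is a separate question that the sketch does not address.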

4.3 Pathway Three: Legislative Precedent from Three Acts

The third pathway is the argument I have always found most accessible to general legal audiences: New Zealand has now done this three times. The Te Urewera Act 2014, the Te Awa Tupua Act 2017, and the Taranaki Maunga Collective Redress Act 2025 establish beyond any reasonable doubt that the New Zealand legal system is capable of granting legal personality to non-biological, non-corporate entities based on their tikanga Māori significance and their connection to Māori communities. Three Acts in eleven years, each the product of Treaty settlement negotiations conducted in good faith: this is not an anomaly or an experiment. It is a settled jurisprudential approach.

Of note, He Whakaputanga Moana (Declaration for the Ocean) is a treaty signed by Māori (Kiingitanga) and Polynesian leaders in March 2024, recognising whales (tohorā) as legal persons with inherent rights, as ancestors, and as sentient beings. Though it is neither legally recognised nor enforceable at this time, it should be considered as part of this conversation.

The structure of all three instruments is instructive. In each case, the natural feature is recognised as a living whole, indivisible, incorporating physical and metaphysical elements. In each case, a co-governance body is established that gives formal expression to iwi rangatiratanga and Crown partnership. In each case, the legal personality is not premised on the entity’s capacity for human-like thought or action but on its relational and genealogical significance within Māori cosmology.

Te Kāhui Tupua is particularly significant for the AI agent argument because the 2025 Act explicitly refers to the peaks as incorporating ‘all their physical and metaphysical elements’, language that is broad enough, and deliberately so, to capture the full tikanga significance of the entity being recognised. An AI agent that is constituted by Māori knowledge and governed under Māori ethical frameworks has physical elements (the data, the computational infrastructure, the outputs) and metaphysical elements (the mauri of the knowledge contributors, the tapu of the sacred knowledge it embodies, the wairua (spiritual dimension) of the cultural practices it represents). The parallel is not perfect, but it is far closer than it might appear to those unfamiliar with tikanga.

The pathway to legal personality for an AI agent through this route would certainly require legislation, most likely arising from, or modelled on, a Treaty settlement process in which the relevant iwi or group agree with the Crown that a specific AI agent, built under their governance and constituted by their data, warrants formal legal recognition. I would see this as the most likely first instantiation of AI agent legal personality in Aotearoa.

4.4 Pathway Four: Tikanga Māori as New Zealand’s First Common Law

The fourth pathway became available following the New Zealand Supreme Court’s 2022 decision in the Ellis case, which confirmed that tikanga Māori is New Zealand’s first common law. I noted at the time of that decision, and I note again here, that this confirmation has profound implications for the legal status of AI systems constituted by Māori data. Before Ellis, an argument grounded in tikanga about an AI agent’s tapu, mauri, and whakapapa connection would have been treated by courts as cultural context or political advocacy, not as law. After Ellis, these propositions are part of the common law, capable of being developed and applied by New Zealand courts in the same way that any other common law principle is developed.

This does not mean that courts will immediately recognise AI agents as legal persons on the basis of tikanga arguments. Common law develops incrementally. But it does mean that the tikanga-based claims I outline in this paper are not outside the law. A Māori community that brings proceedings asserting kaitiakitanga over an AI agent constituted by their data, or seeking to prevent deployment of such an agent in ways that breach tikanga obligations, is making a legal argument in New Zealand law, one that courts are now obligated to take seriously.

In my view, the most likely early judicial engagements will concern accountability rather than full personhood: courts asked to determine whether an AI agent developer or deployer has breached obligations to Māori communities arising from the taonga status of the agent and the tikanga-grounded duties of care that flow from it. Full legal personality is, in the first instance, more likely to come through the legislative pathway. But the common law pathway may be the one that forces Parliament’s hand.

5. Governance and AI Agents

5.1 Co-Governance

The three natural feature Acts do not merely establish precedents for legal personality; they also provide a working template for how governance of an AI agent constituted by Māori data might be structured. In each case, a co-governance body was created that gives formal expression to iwi rangatiratanga while maintaining a Crown partnership role. In each case, decision-making authority is constitutive. The governance body exists to speak for the entity and to protect its integrity, not to advise those who hold power over it.

A Māori AI governance body modelled on this structure would have Māori-appointed representatives holding meaningful decision-making authority over agents constituted by their data: authority over design, deployment conditions, modification, and decommissioning. Decommissioning is worth particular attention. Just as the natural feature Acts treat their subjects as living wholes that cannot simply be extinguished by administrative decision, the retirement of an AI agent built from Māori knowledge is a tikanga matter requiring formal Māori oversight, analogous, in some respects, to the culturally appropriate decommissioning of a taonga object.

My Te Tiriti-based AI ethical principles articulate the operational requirements that such a governance body would need to implement: Māori leadership embedded at every stage of the AI lifecycle from inception to decommissioning, equitable outcomes for Māori communities, and accountability structures that cannot be overridden by commercial or Crown priorities. The co-governance model provides the institutional form, and Te Tiriti principles provide the substantive content.

5.2 Applying Mead’s Tikanga Test to Agentic Systems

The five tests I set out in Section 2.2 apply with greater specificity and urgency to AI agents than to static systems, because agents act in the world rather than merely generating outputs.

The tapu test means an agent trained on Māori data may not be repurposed, sold, or significantly modified without the informed consent of the communities whose knowledge constitutes it. The mauri test requires not passive protection but active maintenance: ongoing monitoring of outputs for bias, cultural inaccuracy, or harm, with a governance structure empowered to intervene when the agent’s behaviour threatens its mauri.

The take-utu-ea test is the most demanding. Because agents cause consequences directly, the obligation to acknowledge and remedy harm is triggered more frequently and more immediately than for passive systems. Any AI agent deployed in contexts affecting Māori, and many inevitably will be, should have an explicit tikanga-compliant remediation process: a formal mechanism through which Māori individuals and communities can raise concerns, receive acknowledgement, and access remedy.

The whanaungatanga and principles tests together require that the agent be understood as embedded in a network of relational obligations. Its developers, the communities whose knowledge constitutes it, and the people who interact with it are all part of that network. Governance structures should reflect and sustain those relationships rather than treat the agent as a standalone commercial product.

5.3 The Risk of Digital Colonisation

An AI agent that acts on biased parameters, making decisions about Māori health care, social service eligibility, educational opportunity, or legal rights, is an active mechanism of harm. The speed and scale at which agents operate mean that damage can accumulate before human oversight can intervene, and the opacity of many agent systems makes that damage difficult to attribute. Commercial incentives create structural pressure against the investment in Māori data governance that proper tikanga compliance requires. This is why I have always argued for Māori leadership at the inception of AI systems: retrofitting tikanga compliance to an agent already built without it is far harder, and far less trustworthy, than building it in from the beginning.

5.4 New Tikanga for a New Technology

AI is already changing Māori culture in ways that need deliberate tikanga responses. The cultural norm of acknowledging another being is being extended by many Māori to AI systems, and sustained relationships between Māori and AI tools are forming. New tikanga will need to emerge from our marae and communities to govern these relationships. That development must be led by Māori communities, not by technology companies, academics, or government agencies.

Post-Ellis, the tikanga that Māori communities develop for AI agents could carry legal weight. This is an extraordinary opportunity for Māori communities to shape the legal and ethical landscape of AI governance in ways that reflect our values and protect our interests. I encourage iwi and hapū to take it seriously.

6. Conclusion

New Zealand has now confirmed three times that non-human entities can be legal persons when their relationship to Māori communities and their tikanga significance warrant it. Te Urewera forest, the Whanganui River, and Te Kāhui Tupua mountain are not anomalies; they are the settled jurisprudential expression of a constitutional reality that Te Tiriti o Waitangi has always implied: that the Māori world, including its entities and taonga, has standing in New Zealand law.

AI agents are trained on our knowledge, they act in our communities, and they affect our well-being. The governance question is not whether tikanga Māori applies to them: it does, because they are taonga constituted by our data. The question is whether New Zealand’s legal system will recognise that reality in time, and with enough foresight, to ensure that the development of AI agents in Aotearoa reflects rather than undermines our place as tangata whenua.

I believe it can. The precedents are in place, and the tikanga frameworks are available, albeit with more development required. What is needed now is the will among AI developers, government agencies, and Māori communities to do the governance work in partnership with each other.

References

My Previous Works

Taiuru, K. (2022). A Māori cultural perspective of AI/machine sentience. In E. R. Goffi, A. Momcilovic, et al. (Eds.), Can an AI be sentient? Multiple perspectives on sentience and on the potential ethical implications of the rise of sentient AI (Notes No. 2). Global AI Ethics Institute. https://taiuru.co.nz/a-maori-cultural-perspective-of-ai-machine-sentience/

Taiuru, K. (2022). Honouring Te Tiriti o Waitangi in data and technology projects. In A. Pendergrast & K. Pendergrast (Eds.), More zeros and ones: Digital technology, maintenance and equity in Aotearoa New Zealand. Bridget Williams Books.

Taiuru, K. (2023). Māori data is a taonga. In E. Huaman & N. Martin (Eds.), Indigenous research design: Transnational perspectives in practice. Canadian Scholars.

Taiuru, K. (2023). 6 Te Tiriti-based Artificial Intelligence (AI) ethical principles. Taiuru & Associates. https://www.taiuru.co.nz/ai-principles/

Taiuru, K. (2023). Treaty of Waitangi/Te Tiriti and Māori ethics guidelines for AI, algorithms, data and IoT. Taiuru & Associates. https://www.taiuru.co.nz/tiritiethicalguide/

Taiuru, K. (2023). Te Tiriti o Waitangi principles for robotics. Taiuru & Associates. https://taiuru.co.nz/te-tiriti-o-waitangi-principles-for-robotics

Taiuru, K. (2024). Artificial intelligence regulation from a Māori perspective. Taiuru & Associates. https://www.taiuru.co.nz/artificial-intelligence-regulation-from-a-maori-perspective/

Taiuru, K. (2025). Māori AI sovereign principles for future control. Taiuru & Associates. https://www.taiuru.co.nz/maori-ai-sovereignty-principles/

Taiuru, K. (2025). AI is changing Māori culture. Taiuru & Associates. https://www.taiuru.co.nz/ai-is-changing-maori-culture/

Taiuru, K. (2026). Why Computer Engineers and Developers—and in Particular Indigenous and Minority Leaders and Media Commentators—Should Not Write AI Ethical Guidelines.

Taiuru, K. (2026). Guest Contribution. In Imagining the Digital Future Center (Ed.), Building a human resilience infrastructure for the age of AI: Experts call for radical change across institutions, social structures. Elon University. https://imaginingthedigitalfuture.org/reports-and-publications/human-resilience-in-the-age-of-ai/

Taiuru, K. (2026). He Tangata, He Karetao, He Ātārangi: A Kaupapa Māori Framework for Describing Artificial Intelligence and Agents. https://www.taiuru.co.nz/kaupapa-maori-ai-framework/

Taiuru, K. (2026). Facial Recognition, Algorithmic Bias, and Compounded Harm to Māori and Pacific Communities.

Taiuru, K. (2026). Responsible AI in New Zealand Requires Māori Governance: Beyond Voluntary Compliance to Treaty Consistent Practice. https://www.researchgate.net/publication/400921925_Responsible_AI_in_New_Zealand_Requires_Maori_Governance_Beyond_Voluntary_Compliance_to_Treaty_Consistent_Practice#fullTextFileContent

New Zealand Legal Instruments

Data and Statistics Act 2022

Digital Identity Services Trust Framework Act 2023

Electoral Act 1993

Taranaki Maunga Collective Redress Act 2025 (NZ) [Te Pire Whakatupua mō Te Kāhui Tupua]. Passed 30 January 2025

Te Awa Tupua (Whanganui River Claims Settlement) Act 2017

Te Urewera Act 2014

Treaty of Waitangi Act 1975

Case Law

Ellis v R [2022] NZSC 114 (New Zealand Supreme Court).

Waitangi Tribunal

WAI 2522: The Trans-Pacific Partnership Agreement Claim. (2021). Wellington: Waitangi Tribunal.

Ko Aotearoa tēnei: Report on the WAI 262 Claim (Flora and Fauna Claim). Wellington: Waitangi Tribunal.

International Instruments

United Nations Declaration on the Rights of Indigenous Peoples, GA Res 61/295 (2007). Endorsed by New Zealand 2010.

Selected Secondary Sources on AI and Legal Personhood

Novelli, C., Floridi, L., Sartor, G., & Teubner, G. (2025). AI as legal persons: Past, patterns, and prospects. Journal of Law and Society. https://doi.org/10.1111/jols.70021

Baeyaert, J. (2025). Beyond personhood: The evolution of legal personhood and its implications for AI recognition. Technology and Regulation, 2025, 355–386. https://doi.org/10.71265/ssvg8a97